Fast.ai tabular data pass np.array

6/24/2023

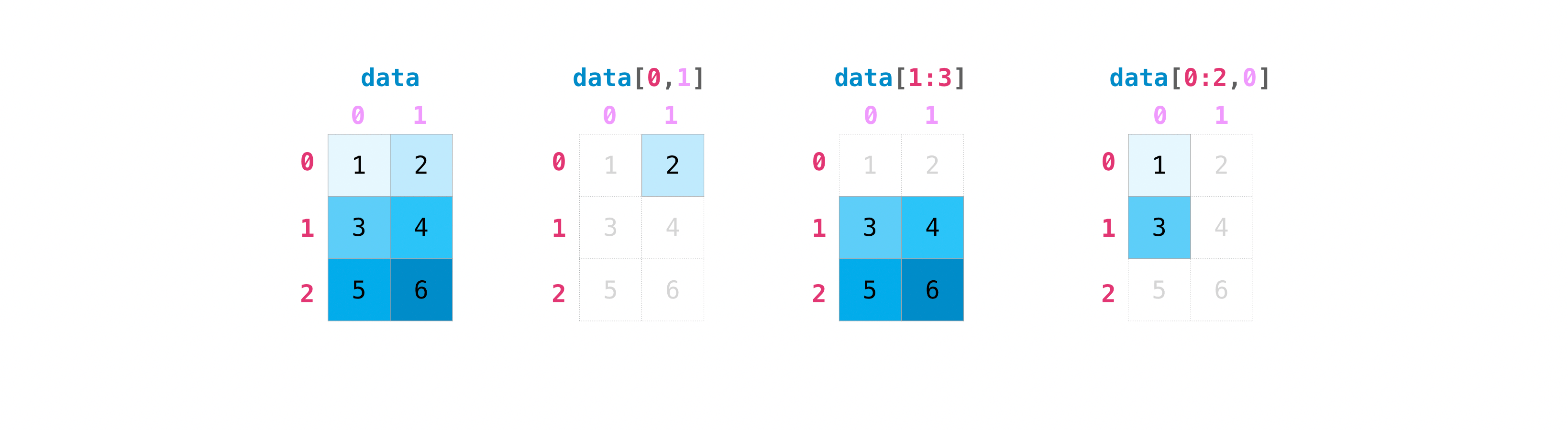

What is fastai Tabular? A TL;DR: when working with tabular data, fastai has introduced a powerful tool to help with preprocessing your data: `TabularPandas`. It's helpful because you can have everything in one place, encode and decode all of your tables at once, and the memory usage on top of your Pandas dataframe can be very minimal.

One of fastai's biggest contributions in working with tabular data is the ease with which embeddings can be used for categorical variables. I have found that using embeddings for categorical variables gives significantly better results.

Getting started with fast.ai: as of 2019, fast.ai supports four types of DL applications: computer vision, natural language text, tabular data, and collaborative filtering. Fast.ai uses type hinting, introduced in Python 3.5, quite heavily. Thus if you type `help(fastai.untar_data)`, you notice type hints.

`to = TabularPandas(train, procs, cat_names, cont_names, y_names="label", y_block=MultiCategoryBlock(), splits=splits)`

All of the above works fine, but after that I get the "Could not do one pass in your dataloader, there is something wrong in it" warning, and when I run `dls.show_batch(3)` it throws `TypeError: can't convert np.ndarray of type numpy.object_. The only supported types are: float64, float32, float16, complex64, complex128, int64, int32, int16, int8, uint8, and bool.` When I run `learn = tabular_learner(dls, y_range=(0,1), layers=, n_out=1, loss_func=F.binary_cross_entropy)` it works, but `learn.fit_one_cycle(5, 1e-2)` throws the same error as above.
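The `TypeError` above means that some array reaching the tensor conversion has dtype `object`. One possible cause (an assumption, not something the post confirms) is a label column that holds a whole list per row. The sketch below uses only pandas on a hypothetical toy frame; the helper name `object_columns` and the column names are made up for illustration. It shows how to spot such columns and expand them into plain numeric columns before building anything on top of the frame.

```python
import pandas as pd

# Hypothetical toy data: "label" stores a list per row, so pandas keeps it as dtype object.
df = pd.DataFrame({
    "feat": [0.1, 0.2, 0.3],
    "label": [[0, 1], [1, 0], [1, 1]],
})

def object_columns(frame):
    """Return the names of columns whose dtype is object."""
    return [c for c in frame.columns if frame[c].dtype == object]

bad = object_columns(df)

# Expand the list-valued column into one float32 column per label position.
labels = pd.DataFrame(df["label"].tolist(), index=df.index).astype("float32")
labels.columns = [f"label_{i}" for i in range(labels.shape[1])]

# Replace the object column with the expanded numeric columns.
clean = pd.concat([df.drop(columns=["label"]), labels], axis=1)
```

If `object_columns(clean)` comes back empty, every column has a dtype from the supported list in the error message. Whether this resolves the error in a particular fastai pipeline depends on how the targets are passed in.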

This post is a tutorial on working with tabular data using fastai. I am doing multilabel classification on tabular data. I've seen various blog posts and a few posts on this forum about this topic, but none have answered my question.

`train` is the training data (800 columns) and `train_targets` are the labels (206 columns, all values are either 0 or 1): `cat_names =`, `cont_names =`, `for i, row in enumerate(train.itertuples()):`, and then `splits = RandomSplitter()(range_of(train))`.

One suggested workaround changes how values are converted to tensors. `v = x.values` extracts the values as a numpy array. If `v.dtype == np.object`, the code deals with arrays whose item type is itself a numpy array: with `nbrows = v.shape[0]`, if `nbrows == 1` then `v = v.item()` (only one row, cannot stack), else `v = np.vstack(v.squeeze())`. It then returns `as_tensor(v, **kwargs)`, with `use_kwargs_dict(dtype=None, device=None, requires_g.

If you have control over the creation of jsoninput, it would be better to write it out as a serial array. The simplest answer would just be `numpy2darrays = np.array(dict['rings'])`. As this avoids explicitly looping over your array in Python, you would probably see a modest speedup.

Our inputs immediately pass through a `BatchSwapNoise` module, based on the Porto Seguro winning solution, which injects random noise into our data for variability. After going through the embedding matrix, the 'layers' of our model include an Encoder and Decoder which compress our data to a 128-long vector before blowing it back up. Load best model: `losses = np.array()`, `best = np.argmin(losses.` In this notebook, we used a basic fastai TabularLearner to generate.
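The object-dtype handling quoted above can be tried in isolation. This is a minimal NumPy-only sketch of the stacking step, not fastai's own code: the function name `stack_object_array` is hypothetical, the final `np.asarray` stands in for the tensor conversion, and the built-in `object` is used for the dtype check because the `np.object` alias was removed in NumPy 1.24.

```python
import numpy as np

def stack_object_array(v):
    """Turn an object array whose items are arrays into one numeric array."""
    if v.dtype == object:
        if v.shape[0] == 1:
            # Only one row: nothing to stack, so unwrap the single item.
            v = v.item()
        else:
            # Several rows: drop the singleton axis and stack the items row-wise.
            v = np.vstack(list(v.squeeze()))
    return np.asarray(v)

# Hypothetical input: a (3, 1) object array where each cell holds a float32 vector.
cells = np.empty((3, 1), dtype=object)
for i in range(3):
    cells[i, 0] = np.array([i, i + 1], dtype=np.float32)

stacked = stack_object_array(cells)

# Single-row case: the lone item is returned as a 1-D numeric array.
one = np.empty((1, 1), dtype=object)
one[0, 0] = np.array([5.0, 6.0])
single = stack_object_array(one)
```

Here `stacked` is an ordinary 2-D `float32` array, which is the kind of input a tensor constructor accepts. The sketch assumes every cell holds an array of the same length; ragged cells would make `np.vstack` raise.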
